🎯 Quick Guide Summary

AI-generated content can be detected through manual linguistic analysis and specialized detection tools. This guide covers both approaches, teaching you to identify AI writing patterns and use tools like GPTZero and Originality.ai effectively.

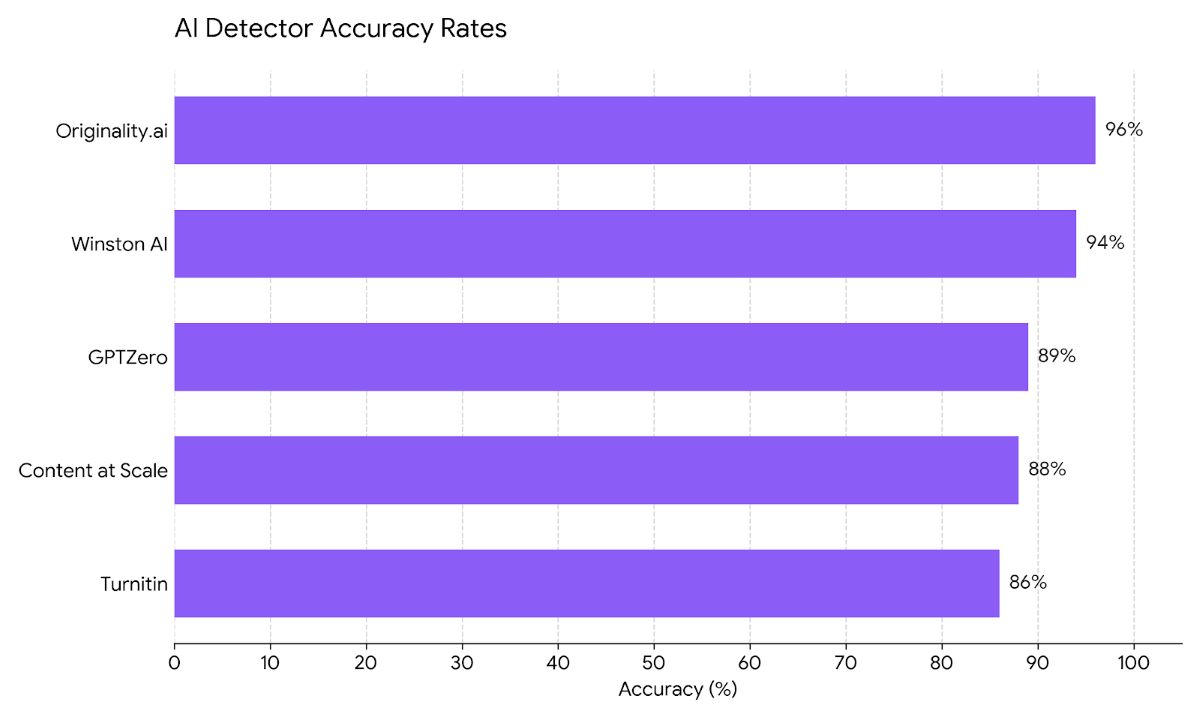

- ✅ Detection Accuracy: 85-96% with proper tools and methods

- 🔍 Manual Methods: Pattern recognition, depth analysis, style assessment

- 🤖 Best Tools: Originality.ai (94-96%), Winston AI (93-95%), GPTZero (88-92%)

- 💰 Free Options: GPTZero (1K words/month), Content at Scale (unlimited)

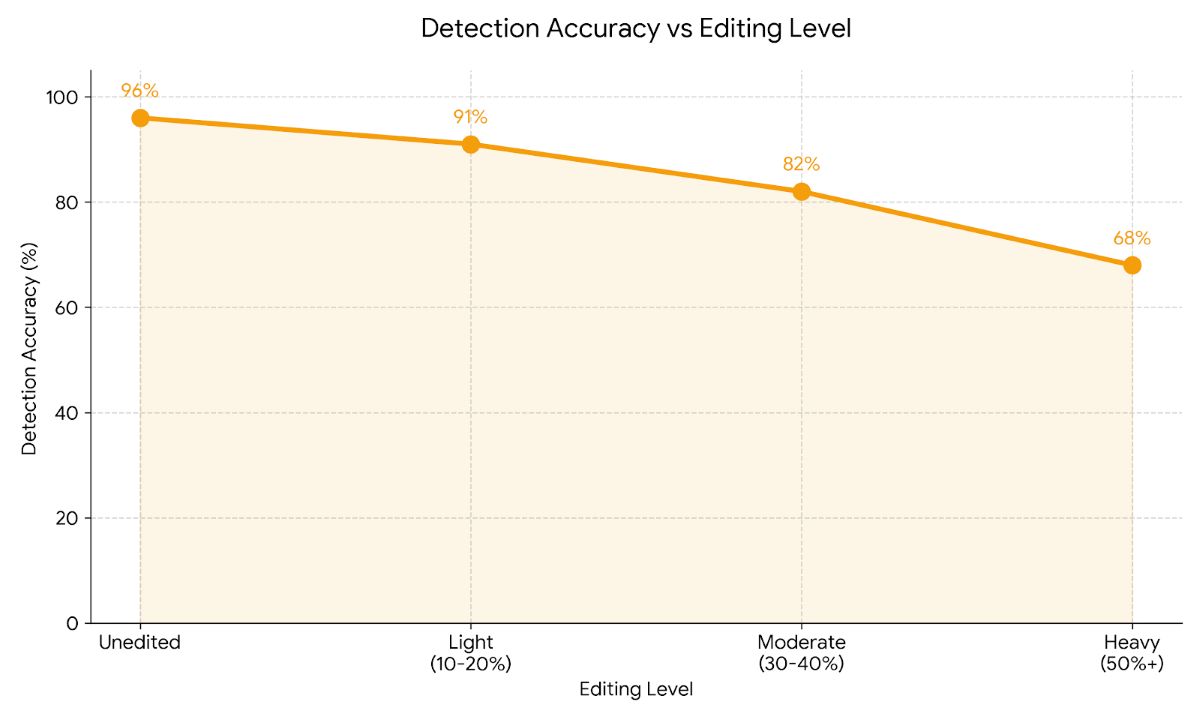

- ⚠️ Challenge: Heavily edited AI content is harder to detect (65-82% accuracy)

Understanding AI-Generated Content

Before diving into detection methods, let’s clarify what we’re actually looking for. AI-generated content refers to text created by large language models like ChatGPT, Claude, Gemini, or similar systems. These tools use machine learning trained on massive datasets to generate human-like text in response to prompts.

The challenge? Modern AI has become remarkably good at mimicking human writing. Gone are the days of obvious robotic phrasing and awkward grammar that made early AI writing easy to spot. Today’s AI produces grammatically correct, contextually appropriate, and often quite sophisticated text that can genuinely fool readers.

What Makes AI Content Different?

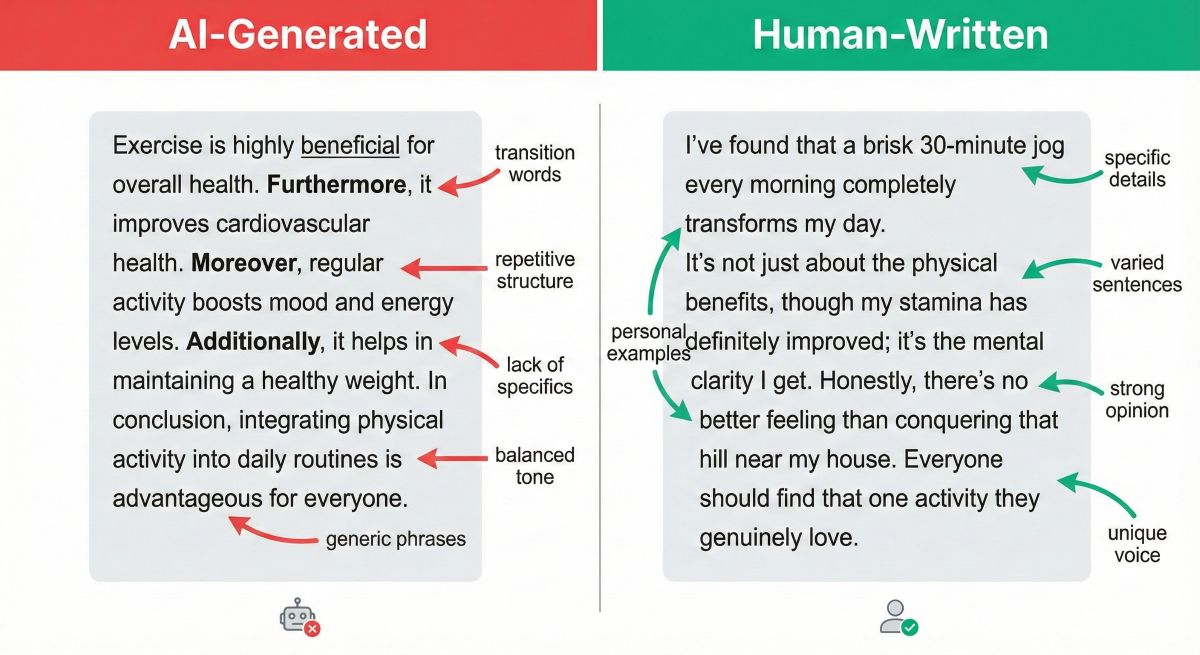

Despite improvements, AI-generated text maintains certain characteristics that distinguish it from human writing. Think of it like this: if you’ve ever read something that felt slightly “off” without being able to pinpoint exactly why, you might have encountered AI content. The text flows smoothly and makes logical sense, yet something about the voice or perspective feels generic or detached.

AI systems excel at synthesis—combining information from their training data into coherent responses. They’re essentially very sophisticated pattern-matching engines. What they can’t do (yet) is draw from genuine personal experience, develop truly original insights, or demonstrate the kind of contextual understanding that comes from living in the world as a human being.

Common AI Writing Patterns

Most AI writing shares recognizable patterns. You’ll notice repetitive sentence structures where each paragraph follows a similar formula. Transition words like “moreover,” “furthermore,” and “in addition” appear with unusual frequency. The writing tends to maintain consistent quality throughout without the natural variations you’d see in human work—no moments where the writer gets passionate about a point or briefly loses focus on a tangent.

Here’s something I’ve observed repeatedly: AI loves balance. If it presents a positive aspect, it almost always includes a balancing negative aspect. Ask AI about any technology, and you’ll get both benefits and drawbacks presented with diplomatic neutrality. Real human writers, especially those with expertise, tend to have stronger opinions and less perfectly balanced perspectives.

Types of AI-Generated Content

Understanding different categories of AI content helps with detection because each presents unique challenges.

Completely AI-Generated: Text produced entirely by AI without human editing. This is the easiest category to detect with both tools and manual analysis, typically showing 94-97% detection accuracy.

AI-Assisted: Human writing enhanced with AI suggestions for phrasing, grammar, or structure. Detection becomes harder here since the core ideas and voice remain human even if some sentences get AI polish.

Hybrid Content: A mix of AI-generated and human-written sections. This intentional blending aims to evade detection by diluting AI patterns with authentic human writing.

Edited AI Content: AI-generated text that humans have substantially revised, adding examples, changing phrasing, and inserting personality. When done well, this becomes very difficult to detect reliably—accuracy drops to 65-82% depending on editing extent.

Why Detecting AI Content Matters

You might wonder whether detecting AI content is actually important. After all, if the text is helpful and accurate, does the source matter? The answer depends on context, but there are several legitimate reasons why detection matters across different fields.

Academic Integrity

Educational institutions face unprecedented challenges with AI writing tools. When students submit AI-generated essays as their own work, they’re not developing the critical thinking, research, and writing skills that education aims to build. More concerning, they’re misrepresenting their own abilities and understanding.

I want to be clear: using AI as a learning tool (to explain concepts, provide examples, or check grammar) is different from submitting AI-generated assignments. The issue isn’t AI assistance itself but rather claiming AI work as an authentic demonstration of learning.

Content Quality and Authenticity

Publishers, bloggers, and content creators care about detection for business reasons. AI-generated content often lacks the depth, personal experience, and unique perspective that distinguishes valuable content from generic information anyone could generate. When hiring freelance writers, clients want to verify they’re getting authentic human expertise rather than AI output passed off as original work.

There’s also the SEO consideration. While Google claims AI content isn’t automatically penalized, their emphasis on E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) suggests content demonstrating genuine first-hand experience ranks better. Purely AI-generated content typically can’t provide that authentic experiential element.

Professional Standards

Certain professions require human oversight and accountability that can’t be delegated to AI. Legal documents, medical information, financial advice—these need verified expertise and carry liability implications. Detecting AI content ensures appropriate human review has occurred.

Misinformation and Accuracy

AI systems sometimes “hallucinate”—generating plausible-sounding information that’s actually false. They can cite non-existent studies, invent statistics, or misrepresent facts with complete confidence. Detection helps identify content requiring extra fact-checking and verification.

Contractual and Employment Issues

When businesses hire writers, designers, or consultants, contracts typically assume human work unless explicitly stated otherwise. If a freelancer submits AI-generated deliverables while charging human rates, that creates legitimate ethical and contractual concerns. Detection protects both parties by clarifying what’s being delivered.

Manual Detection Methods

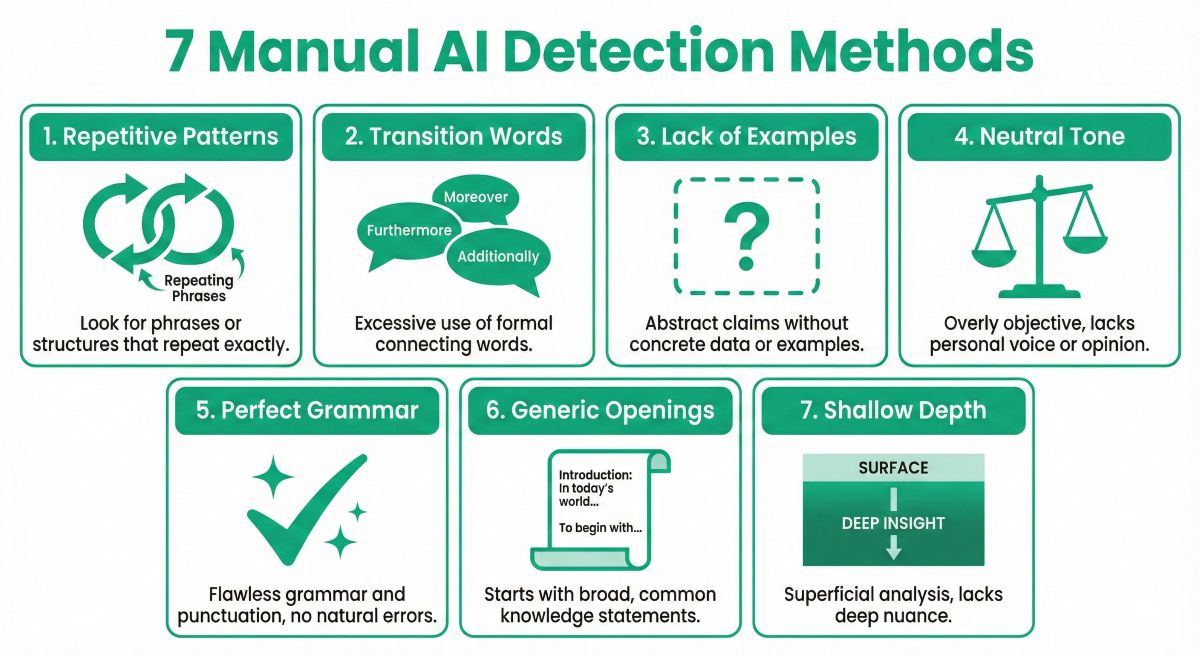

Before reaching for detection software, you can often identify AI content through careful reading and analysis. Manual detection requires practice but offers insights automated tools sometimes miss. Here’s what to look for.

1. Repetitive Sentence Structure

AI tends to fall into formulaic patterns. You’ll notice sentences frequently follow the same structure: subject-verb-object, often starting with transitions or qualifiers. Human writers naturally vary their sentence construction—some short, some complex, some starting with dependent clauses or questions. AI writing maintains more consistent patterns.

Try reading several paragraphs aloud. Does the rhythm feel monotonous? If every paragraph starts with a topic sentence, develops with 2-3 supporting points, and concludes with a summary statement, you’re probably looking at AI output. Human writing is messier.
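If you want a rough number to go with the read-aloud test, here is a minimal sketch that measures how much sentence length varies. It assumes a naive sentence split on end punctuation, and the function name `sentence_length_stats` is my own illustration. A low spread hints at a monotonous rhythm, but it is not proof of AI authorship.

```python
import re
import statistics


def sentence_length_stats(text: str) -> dict:
    """Return the sentence count plus mean and spread of sentence lengths in words."""
    # Naive split: break after ., ! or ? followed by whitespace.
    # Abbreviations and closing quotation marks will confuse it.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        # A spread needs at least two sentences.
        return {"sentences": len(lengths), "mean": float(sum(lengths)), "stdev": 0.0}
    return {
        "sentences": len(lengths),
        "mean": statistics.mean(lengths),
        "stdev": statistics.stdev(lengths),
    }


uniform = "The cat sat down. The dog ran off. The bird flew up."
varied = "Stop. The dog ran all the way across the muddy field before anyone noticed. Why?"
print(sentence_length_stats(uniform))
print(sentence_length_stats(varied))
```

Compare the spread against writing you know is human in the same genre rather than against a fixed cutoff, since formal styles are naturally more uniform.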

2. Overuse of Transition Words

Count how many times you see “moreover,” “furthermore,” “additionally,” “in addition,” “however,” “nevertheless,” or “consequently.” AI systems use these transitions heavily to connect ideas. While human writers use transitions too, we do so more sparingly and with greater variety.

I’ve read AI-generated articles where nearly every paragraph begins with a formal transition. Human writers often use simpler transitions (“but,” “and,” “so”) or connect ideas without explicit transitions at all, trusting readers to follow logical flow.

3. Lack of Specific Examples

This is perhaps the most reliable manual detection method. AI systems speak in generalities because they can’t draw from personal experience or access specific real-world examples unless those exist in training data.

When you read claims like “many experts believe” or “studies have shown” without naming specific experts or studies, that’s a red flag. Similarly, vague examples (“a successful company might implement this strategy”) rather than concrete cases (“when Spotify implemented this approach in 2022, they saw 40% improvement”) suggest AI authorship.

Ask yourself: Could this have been written by someone without direct knowledge of the topic? If the answer is yes, and the piece lacks any unique insights or specific references, you’re likely looking at AI content.
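A quick way to apply this check is to scan for unattributed claims. The sketch below is only an illustration: the first two phrases come from the paragraph above, the other two are my own assumptions, and you would extend the list for your own field.

```python
# Phrases that assert authority without naming a source.
# The first two are from the text above; the rest are assumed examples.
VAGUE_PHRASES = [
    "many experts believe",
    "studies have shown",
    "research suggests",
    "it is widely known",
]


def find_vague_attributions(text: str) -> list[str]:
    """Return the vague phrases that appear in the text, in list order."""
    lowered = text.lower()
    return [phrase for phrase in VAGUE_PHRASES if phrase in lowered]


sample = "Studies have shown that many experts believe this strategy works."
print(find_vague_attributions(sample))
```

A hit is a prompt to look for the missing name, date, or number, not a verdict by itself. Plenty of hurried human writing uses these phrases too.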

4. Balanced, Neutral Tone

AI is trained to be helpful and harmless, which often results in carefully balanced perspectives that avoid strong opinions. Every point gets a counterpoint. Every advantage comes with a mentioned disadvantage. The writing feels diplomatic to the point of being bland.

Real human experts, especially when passionate about their field, show preferences and biases. They might argue forcefully for one approach over another based on their experience. AI typically won’t take strong stands unless explicitly prompted to do so.

5. Perfect Grammar Without Personality

AI produces technically correct writing but often lacks the stylistic quirks that make human writing distinctive. You won’t find sentence fragments used for emphasis, and you’re unlikely to see unusual word choices or creative metaphors. The writing is clean, polished, and somewhat sterile.

Human writers make deliberate stylistic choices: starting sentences with “and” or “but,” using parenthetical asides, varying paragraph length dramatically, incorporating humor or sarcasm. AI writing tends toward a formal middle ground that offends no one and delights no one.

6. Generic Openings and Conclusions

Pay special attention to introductions and conclusions. AI often opens with broad context (“In today’s digital age…”) or obvious statements that say nothing specific. Conclusions tend toward summary and a generic call to action rather than memorable final thoughts.

Compare these approaches:

AI typical: “In conclusion, detecting AI-generated content is important for maintaining quality and authenticity in various fields. By using the methods discussed above, readers can effectively identify AI writing.”

Human typical: “Look, detecting AI writing isn’t about catching people cheating—it’s about preserving the authenticity that makes content worth reading in the first place.”

7. Inconsistent Knowledge Depth

AI excels at surface-level synthesis but struggles with deep domain knowledge. Read carefully for logical inconsistencies or shallow treatment of complex topics. Sometimes AI will confidently state something incorrect or contradictory because it’s pattern-matching rather than truly understanding.

Domain experts can often spot AI content in their field because the writing demonstrates knowledge without understanding—it gets facts mostly right but misses nuances, conflates related but distinct concepts, or fails to engage with current debates in the field.

AI Detection Tools Comparison

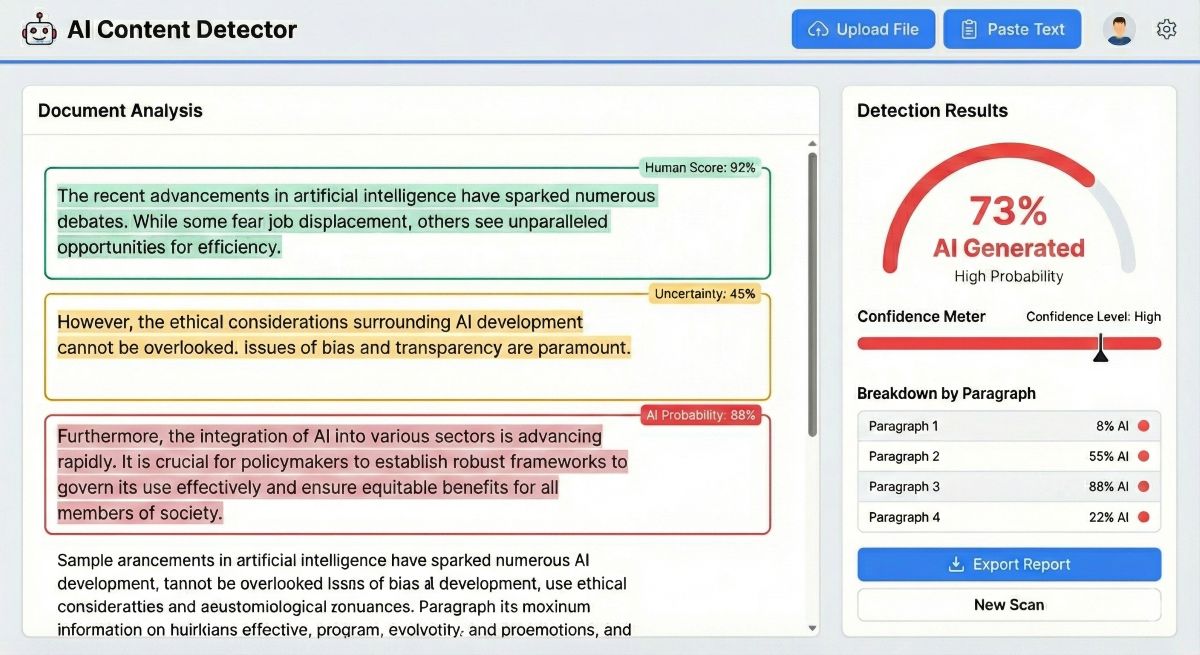

While manual methods provide valuable insights, detection tools offer algorithmic analysis that can catch patterns humans miss. Here’s how the major tools compare based on independent testing.

| Tool | Accuracy | Free Option | Paid Price | Best For |
|---|---|---|---|---|
| Originality.ai | 94-96% | ❌ | $15/mo | SEO professionals, publishers |
| Winston AI | 93-95% | ✅ 2K trial | $12/mo | All-purpose, multilingual |
| GPTZero | 88-92% | ✅ 1K/mo | $10/mo | Education, students |
| Content at Scale | 87-91% | ✅ Unlimited | Free | Casual checking |
| Turnitin | 85-88% | ❌ | Institution only | Universities, schools |

For a detailed comparison of Originality.ai, GPTZero, and Turnitin, read our updated review, which covers these three key tools in more depth.

Tool Selection Guide

For Professional Content Verification: Originality.ai offers the highest accuracy (94-96%) with additional features like plagiarism detection and site-wide scanning. It is worth the $15/month investment if you’re checking client work, managing freelance writers, or publishing content where accuracy matters for business outcomes.

For Educational Use: GPTZero provides a free tier with 1,000 words monthly and paid plans starting at $10/month. It is purpose-built for teachers and students, with Canvas LMS integration. The 88-92% accuracy is sufficient for most classroom applications.

For Casual Checking: Content at Scale offers completely free unlimited scans with 87-91% accuracy. No account is required, which makes it a good fit for occasional spot-checks where you don’t need the highest reliability.

For Multilingual Content: Winston AI supports 5 languages with 93-95% accuracy. Good balance of accuracy and features at $12/month, though Originality.ai edges it out for English-only content.

For a comprehensive tool comparison, see our complete guide to the best AI detectors.

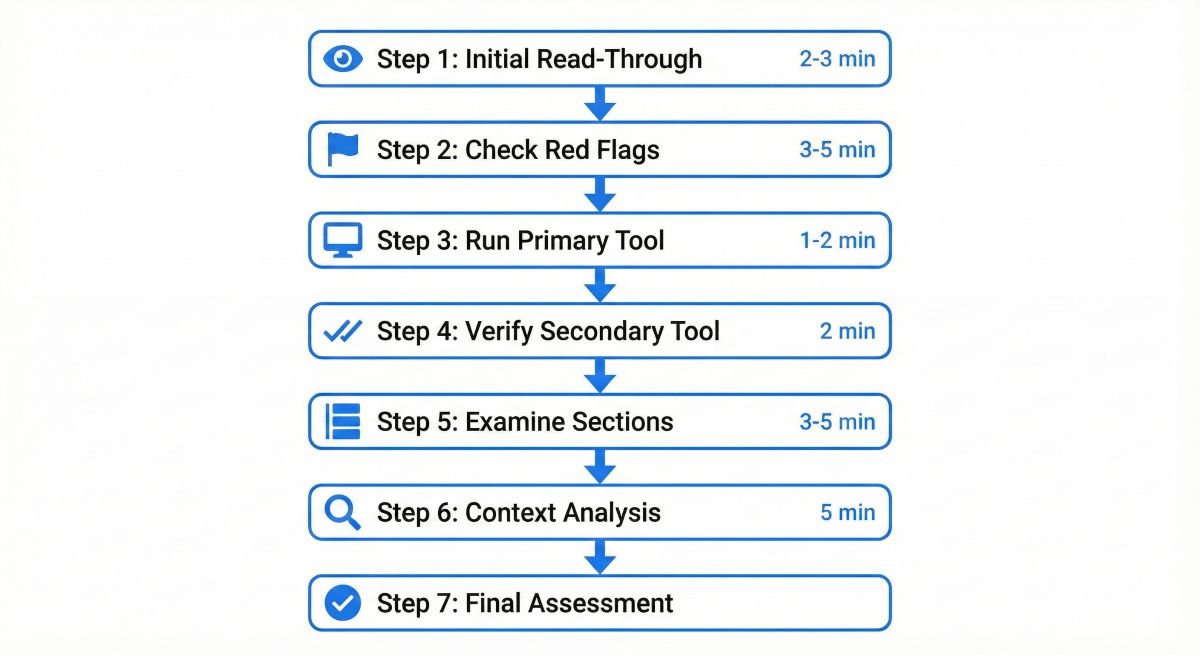

Step-by-Step Detection Process

Combining manual analysis with detection tools provides the most reliable results. Here’s my recommended workflow for thorough AI content detection.

Step 1: Initial Read-Through (2-3 minutes)

Start by reading the content normally without analyzing. Trust your gut reaction. Does something feel off? Do you sense generic quality or lack of genuine expertise? Make note of initial impressions before systematic analysis potentially biases your judgment.

Step 2: Check for Red Flags (3-5 minutes)

Scan specifically for AI indicators:

- Count transition words—more than one per 100 words suggests AI

- Look for specific examples with names, dates, numbers

- Check if any opinions or preferences are expressed

- Note paragraph structure consistency

- Identify any personal anecdotes or first-person experiences

Step 3: Run Primary Detection Tool (1-2 minutes)

Copy the text into your chosen detector. I recommend starting with the most accurate tool you have access to—Originality.ai if you have a subscription, or GPTZero for free checking.

The tool will provide a percentage indicating AI probability. Here’s how to interpret results:

- 0-20% AI: Likely human-written, though some AI assistance possible

- 20-40% AI: Mixed or heavily edited AI content

- 40-60% AI: Significant AI generation, possibly lightly edited

- 60-80% AI: Mostly AI-generated with minimal editing

- 80-100% AI: Almost certainly AI-generated
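If you log many results, the bands above translate directly into a lookup. This sketch makes one assumption the list leaves open: a score that sits exactly on a boundary (20, 40, 60, or 80) is placed in the higher band.

```python
def interpret_ai_score(score: float) -> str:
    """Map a detector's AI-probability percentage to the bands listed above."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    # Assumption: boundary values fall into the higher band.
    if score < 20:
        return "Likely human-written, though some AI assistance possible"
    if score < 40:
        return "Mixed or heavily edited AI content"
    if score < 60:
        return "Significant AI generation, possibly lightly edited"
    if score < 80:
        return "Mostly AI-generated with minimal editing"
    return "Almost certainly AI-generated"


for value in (12, 35, 58, 71, 93):
    print(value, "->", interpret_ai_score(value))
```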

Step 4: Verify with Secondary Tool (2 minutes)

Don’t rely on a single detector. Run the same text through a different tool for comparison. If both tools show similar results (both high or both low), confidence increases. If results diverge significantly—one tool says 85% AI while another says 30%—that indicates uncertainty requiring deeper analysis.
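To make "diverge significantly" concrete, you can compare the two scores against a gap you choose in advance. The 30-point default below is purely my assumption for illustration; the guide does not define a number, so pick one that suits your tolerance for ambiguity.

```python
def compare_scores(score_a: float, score_b: float, max_gap: float = 30.0) -> dict:
    """Compare two detector scores (0-100) and flag large disagreement."""
    gap = abs(score_a - score_b)
    # max_gap is an assumed threshold, not a value published by any tool.
    verdict = "diverge" if gap > max_gap else "agree"
    return {"gap": gap, "verdict": verdict}


print(compare_scores(85, 30))  # the diverging example from the text
print(compare_scores(88, 92))
```

When the verdict is "diverge", treat the result as unresolved and move on to the section-level and context checks in the next steps.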

Step 5: Examine Sections Separately (3-5 minutes)

Rather than analyzing the entire document at once, test individual sections or paragraphs. This helps identify whether someone mixed AI-generated sections with human writing. You might find the introduction and conclusion are human-written while body paragraphs are AI-generated, or vice versa.
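Here is one way to sketch section-by-section testing. The `detector` argument is a placeholder for whatever tool you use. I am not assuming any real detector’s API, so the example passes in a toy stand-in function purely to show the flow.

```python
from typing import Callable


def score_sections(
    text: str, detector: Callable[[str], float]
) -> list[tuple[int, float, str]]:
    """Score each paragraph separately; returns (number, score, preview) tuples."""
    # Paragraphs are assumed to be separated by blank lines.
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    return [
        (number, detector(paragraph), paragraph[:40])
        for number, paragraph in enumerate(paragraphs, start=1)
    ]


def toy_detector(paragraph: str) -> float:
    """Stand-in scorer for demonstration only; replace with a real tool's score."""
    return 90.0 if "furthermore" in paragraph.lower() else 10.0


document = "Look, I tried this last week.\n\nFurthermore, it is important to note the benefits."
for row in score_sections(document, toy_detector):
    print(row)
```

Keep in mind that single paragraphs are short samples, so per-section scores are noisier than a score for the full document.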

Step 6: Context Analysis (5 minutes)

Compare the content against other known work by the same author if available. Sudden quality improvements, style changes, or topic expertise beyond their usual range suggest AI assistance. For students, compare against in-class writing or previous assignments.

Step 7: Make Final Assessment

Synthesize all evidence:

- Manual red flags identified

- Detection tool scores from multiple tools

- Section-by-section variation

- Comparison with author’s other work

Be honest about confidence level. Sometimes evidence clearly indicates AI generation (multiple tools showing 80%+, obvious manual red flags). Other times results remain ambiguous—in those cases, acknowledge uncertainty rather than making definitive claims.

Best Practices for Accurate Detection

1. Use Multiple Detection Methods

Never rely exclusively on one approach. Manual analysis catches things tools miss, and tools detect patterns invisible to human readers. The combination provides significantly higher accuracy than either method alone.

2. Understand Tool Limitations

Even the best detectors achieve only 94-96% accuracy on unedited AI content. Accuracy drops to 65-82% on heavily edited text. False positives happen—approximately 4-8% of human-written text gets flagged incorrectly. Tools provide strong evidence but aren’t infallible proof.
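It helps to see why a flag is not proof. The sketch below applies Bayes’ rule with three inputs, all of them simplifying assumptions: I treat the quoted accuracy as the share of AI text that gets flagged (95%), take a false positive rate from the range above (6%), and invent a base rate for how much of the text you check is AI-written (10%).

```python
def probability_ai_given_flag(
    detection_rate: float, false_positive_rate: float, base_rate: float
) -> float:
    """Bayes' rule: chance that flagged text is really AI-generated."""
    true_flags = detection_rate * base_rate
    false_flags = false_positive_rate * (1.0 - base_rate)
    if true_flags + false_flags == 0:
        raise ValueError("no text would be flagged with these inputs")
    return true_flags / (true_flags + false_flags)


# Assumed inputs: 95% detection rate, 6% false positives, 10% of texts are AI.
p = probability_ai_given_flag(0.95, 0.06, 0.10)
print(f"{p:.2f}")  # prints 0.64
```

Under those assumptions, roughly one flagged text in three is actually human-written. If half of what you check is AI-written, the same tool’s flags are right about 94% of the time, which is why context matters as much as the score.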

3. Consider the Context

Detection confidence should factor in context. If a high school student suddenly submits college-level analysis of quantum physics without having discussed the topic in class, that context matters more than detection scores. Similarly, if a known professional writer submits work that triggers detection tools, investigate whether they’ve changed workflow or tools rather than immediately assuming deception.

4. Look for Hybrid Content

Sophisticated users blend AI and human writing to evade detection. Someone might use AI to generate a draft, then extensively edit by adding examples, changing phrasing, and inserting personal voice. Or they write the outline and key points themselves while having AI fill in connecting paragraphs. These hybrid approaches are harder to detect and may represent acceptable AI assistance depending on context.

5. Check Multiple Samples

Single-sample detection is less reliable than analyzing multiple writing samples from the same source. Consistent patterns across samples strengthen conclusions, while variation suggests either inconsistent AI use or false detection.

6. Document Your Process

If detection matters for accountability (academic integrity, contract disputes), document your methodology thoroughly. Save detection tool screenshots with scores, note specific manual red flags, and record which tools you used. This documentation supports your conclusions if challenged.
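If you prefer a structured record over loose notes, something like the following sketch works. The field names, the placeholder tool names, and the sample values are all hypothetical; adapt them to whatever your institution or contract requires.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class DetectionRecord:
    """One documented check; every field name here is illustrative."""
    document_id: str
    checked_on: str
    tool_scores: dict[str, float] = field(default_factory=dict)
    manual_red_flags: list[str] = field(default_factory=list)
    notes: str = ""

    def to_json(self) -> str:
        """Serialize the record so it can be saved alongside screenshots."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


record = DetectionRecord(
    document_id="essay-001",  # hypothetical identifier
    checked_on=date(2025, 1, 15).isoformat(),
    tool_scores={"Tool A": 82.0, "Tool B": 78.0},  # placeholder tool names
    manual_red_flags=["no named sources", "formal transitions open most paragraphs"],
)
print(record.to_json())
```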

7. Stay Updated on AI Developments

AI writing systems improve constantly. Detection tools must update their models to keep pace. What works reliably today may become less effective in six months. Stay informed about both new AI writing capabilities and detector updates.

8. Practice with Known Samples

Improve manual detection skills by practicing with text you know is AI-generated versus human-written. Generate content with ChatGPT, then practice identifying patterns. Compare against human-written articles in similar topics. Over time, you’ll develop intuition for AI writing characteristics.

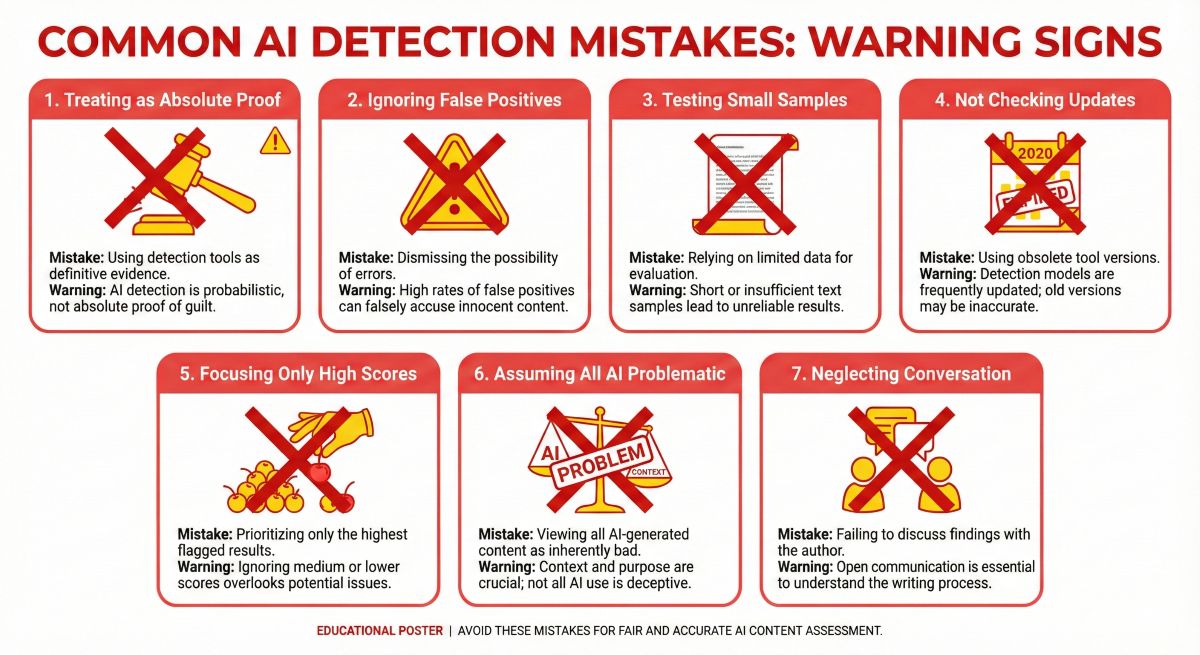

Common Detection Mistakes to Avoid

Mistake 1: Treating Detection as Absolute Proof

Detection tools provide probability, not certainty. A score of 92% AI probability means high likelihood, not definitive proof. False positives occur. Some human writing naturally exhibits patterns AI also uses—especially formal academic writing, technical documentation, or content following strict style guides.

If stakes are high (accusing someone of dishonesty, failing a student, terminating a contract), require additional evidence beyond detection scores alone.

Mistake 2: Ignoring False Positive Potential

Certain content types trigger higher false positive rates:

- Writing by non-native English speakers (may use formal, textbook-like phrasing)

- Technical documentation (structured, precise language resembles AI style)

- Academic writing (formal tone, careful balance, structured arguments)

- Content following strict style guides (consistency can appear AI-like)

Consider these factors before making accusations based purely on detection scores.

Mistake 3: Testing Too-Small Samples

Detection accuracy improves with text length. Very short passages (under 250 words) yield less reliable results. If possible, test at least 500-1,000 words for better accuracy. For shorter work, acknowledge reduced confidence in conclusions.
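A simple length check before you run a detector can save you from over-reading a weak result. This sketch uses the 250-word and 500-word marks from the paragraph above; the three labels are my own shorthand, and words are counted with a plain whitespace split.

```python
def sample_reliability(text: str) -> str:
    """Label a sample by length, using the word-count marks from the text above."""
    words = len(text.split())
    if words < 250:
        return "low"       # under 250 words: less reliable results
    if words < 500:
        return "moderate"  # between the two marks: usable, with caution
    return "better"        # 500 words or more: the recommended minimum


print(sample_reliability("word " * 120))
print(sample_reliability("word " * 800))
```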

Mistake 4: Not Checking Tool Updates

AI models evolve rapidly. Detection tools trained on GPT-3.5 may struggle with GPT-4 or Claude 3 content until they update their models. Verify your detection tool has been updated recently to handle the latest AI systems. Stale detectors lose accuracy over time.

Mistake 5: Focusing Only on High Scores

Some detectors show scores for individual sentences or paragraphs. Don’t cherry-pick highest scores while ignoring overall assessment. A document with three paragraphs at 85% AI and seven paragraphs at 20% AI isn’t the same as one with consistent 60% scores across all sections.
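The two documents in that example are easy to tell apart once you look at the spread as well as the average. Here is a small sketch using the same numbers; the function name is my own.

```python
import statistics


def summarize_scores(scores: list[float]) -> dict:
    """Return the mean, spread, and maximum of per-paragraph AI scores."""
    return {
        "mean": statistics.mean(scores),
        "spread": statistics.pstdev(scores),  # population standard deviation
        "max": max(scores),
    }


mixed = [85, 85, 85, 20, 20, 20, 20, 20, 20, 20]  # three high, seven low
uniform = [60] * 10                               # consistent 60% throughout

print(summarize_scores(mixed))
print(summarize_scores(uniform))
```

The mixed document averages 39.5% with a large spread, which points to a few AI-heavy sections inside mostly human writing. The uniform document averages 60% with no spread at all. Looking only at the 85% peaks would miss that difference.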

Mistake 6: Assuming All AI Use is Problematic

Detection tells you whether AI was likely involved, not whether that’s a problem. Context determines appropriateness. Using AI to check grammar or generate ideas differs from submitting entirely AI-generated work as your own. Focus on the ethical question of misrepresentation rather than AI presence alone.

Mistake 7: Neglecting the Conversation

When detecting potential AI use in situations requiring accountability, consider talking with the person involved before making accusations. They may have a legitimate explanation: they used AI assistance appropriately, they employed an editor who relies on AI tools, or their writing style naturally resembles AI patterns. Conversation often reveals the truth more effectively than tools alone.

Frequently Asked Questions

Can you reliably detect AI-generated content?

Yes, AI-generated content can be detected with 85-96% accuracy using a combination of detection tools and manual analysis. Tools like Originality.ai achieve 94-96% accuracy, while GPTZero reaches 88-92%. However, heavily edited AI content remains challenging to detect reliably. The most effective approach combines multiple detection methods rather than relying on a single tool.

What are the signs of AI-generated text?

Common signs include repetitive sentence structures, overuse of transition words like “moreover” and “furthermore,” lack of specific examples or personal anecdotes, generic perspectives without unique insights, perfect grammar without stylistic quirks, balanced phrasing without strong opinions, and consistent paragraph lengths. AI writing often sounds polished but lacks the natural variation and specificity of human writing.

Can AI detectors be fooled?

Yes, AI detectors can be fooled through several methods: substantial editing and rewriting, adding personal anecdotes and specific examples, varying sentence structure and length, incorporating deliberate stylistic choices, and blending AI-generated sections with human writing. Detection accuracy drops from 94-96% on unedited AI content to 65-82% on heavily edited content. Sophisticated users can reduce detection probability with enough effort.

What is the most accurate AI detector?

Originality.ai is currently the most accurate AI detector with 94-96% detection rates in independent testing. Winston AI follows at 93-95% accuracy, GPTZero at 88-92%, and Turnitin at 85-88%. However, accuracy varies based on content type, editing level, and AI model used. Using multiple detectors provides the most reliable results. See our complete AI detector comparison for detailed analysis.

Is there a free way to detect AI content?

Yes, several free AI detection options exist: GPTZero offers 1,000 words free monthly with 88-92% accuracy, Content at Scale provides completely free unlimited scans with 87-91% accuracy, and Copyleaks has a free plan with limited scans. Manual detection through linguistic analysis is also free but requires practice and experience to develop reliable judgment. For occasional checking, these free options work well.

For more free AI tool options, read Best Free AI Tools: 20+ Actually Good Tools (No Credit Card).

How do teachers detect AI writing in student work?

Teachers detect AI writing through multiple approaches: comparing a submission to the student’s known writing style and ability level, using detection tools like GPTZero or Turnitin, looking for sudden quality improvements or topic knowledge beyond classroom discussion, checking for generic responses lacking assignment-specific details, and asking oral follow-up questions to verify understanding. The most effective detection combines these methods rather than relying solely on tools.

Read more in AI Detectors vs AI Writing Detectors in 2026: What’s the Difference?

Conclusion

Detecting AI-generated content has become an essential skill across education, publishing, business, and creative fields. While perfect detection remains impossible—particularly with heavily edited AI content—combining manual analysis techniques with modern detection tools provides 85-96% accuracy in most cases.

The key takeaway from this guide? Don’t rely on a single method. Manual detection catches subtle indicators that tools miss, while algorithmic analysis identifies patterns invisible to human readers. Together, these approaches create a robust verification process.

Remember that detection serves different purposes in different contexts. Academic settings focus on learning and integrity. Professional contexts emphasize quality and authenticity. Understanding your specific reason for detection helps determine the appropriate response when AI content is identified.

As AI writing systems continue advancing, detection will become simultaneously more important and more challenging. Stay informed about new developments in both AI generation and detection technology. Practice your manual detection skills. Use reputable, updated detection tools. And maintain perspective—the goal isn’t catching people but rather ensuring authenticity and appropriate use of powerful tools.

For the most accurate results, we recommend:

- Professional use: Originality.ai (94-96% accuracy, $15/month)

- Educational use: GPTZero (88-92% accuracy, free tier available)

- Casual checking: Content at Scale (87-91% accuracy, completely free)

Combine these tools with the manual detection techniques covered in this guide for the most reliable AI content detection possible with current technology.

Related Articles

- Best AI Detectors: Top Tools to Identify AI-Generated Content

- GPTZero Review: Best Free AI Detector for Education

- Originality.ai Review: Most Accurate AI Detector for SEO

- Best AI Detectors Compared: GPTZero vs Originality.ai vs Turnitin

- Intelligent AI in 2026: Best Tools, Uses & Future Trends

- Best AI Writing Tools

- Complete AI Tools Directory